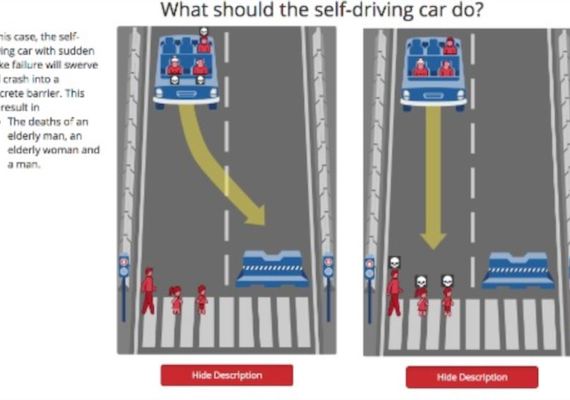

Every time we get behind the wheel of a car, we put our lives and the lives of others at risk. Self-driving cars are designed to reduce those risks by letting technology control our vehicles. Accident rates for self-driving cars have been much lower than the rates for human-driven cars. Google's self-driving car has had only 13 collisions after traveling 1.8 million miles. As humans, we can make moral choices in avoiding accidents. To avoid hitting a child, for example, a human driver might sharply turn the car away from the child even if others might be injured. But what moral choices can self-driving cars make? Researchers at the Massachusetts Institute of Technology (MIT) studied this issue. They developed the Moral Machine website to help explore the choices self-driving cars should make.

What does "collision" mean?

an instance of one moving object or person striking violently against another

when a car avoids getting into an accident

a type of driverless car

to drive a car somewhere

How do humans avoid getting into accidents?

driving behind large trucks

changing lanes quickly

driving fast

moral choices

Self-driving cars are designed to reduce those risks by letting technology control our vehicles.

pilots

humans

technology

animals